k-表示具有质心约束

Dee*_*Ess 6 python algorithm k-means data-science

我正在为数据科学课程的介绍开展一个数据科学项目,我们决定解决加利福尼亚海水淡化厂的一个问题:"我们应该把k植物放在哪里以最小化邮政编码的距离?"

我们到目前为止的数据是,拉链,城市,县,流行,拉特,长,水量.

问题是,我找不到任何关于如何迫使质心被限制在海岸上的资源.我们到目前为止所考虑的是:使用普通的kmeans算法,但是一旦聚类已经稳定,就将质心移动到海岸(坏)使用具有权重的正常kmeans算法,使得沿海拉链具有无限的权重(我已经告诉这不是一个很好的解决方案)

你们有什么感想?

我会通过设置可能的中心点(即你的海岸线)来解决这个问题。\n我认为这与纳撒尼尔·索尔的

第一条评论很接近。这样,对于每次迭代,不是选择平均值,而是通过与簇的接近度来选择可能集合中的一个点。

I\xe2\x80\x99 将条件简化为只有 2 个数据列(经度和纬度),但您应该能够推断出这个概念。为了简单起见,为了演示,我基于这里的代码。

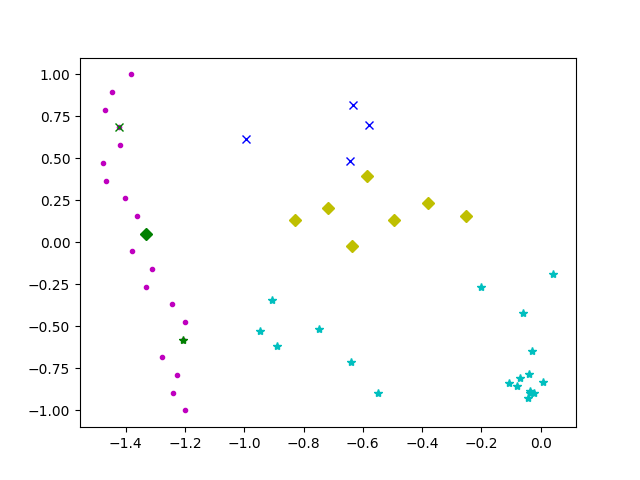

\n\n在此示例中,紫色点是海岸线上的位置。如果我理解正确的话,最佳海岸线位置应该是这样的:

\n\n

参见下面的代码:

\n\n#! /usr/bin/python3.6\n\n# Code based on:\n# https://datasciencelab.wordpress.com/2013/12/12/clustering-with-k-means-in-python/\n\nimport matplotlib.pyplot as plt\nimport numpy as np\nimport random\n\n##### Simulation START #####\n# Generate possible points.\ndef possible_points(n=20):\n y=list(np.linspace( -1, 1, n ))\n x=[-1.2]\n X=[]\n for i in list(range(1,n)):\n x.append(x[i-1]+random.uniform(-2/n,2/n) )\n for a,b in zip(x,y):\n X.append(np.array([a,b]))\n X = np.array(X)\n return X\n\n# Generate sample\ndef init_board_gauss(N, k):\n n = float(N)/k\n X = []\n for i in range(k):\n c = (random.uniform(-1, 1), random.uniform(-1, 1))\n s = random.uniform(0.05,0.5)\n x = []\n while len(x) < n:\n a, b = np.array([np.random.normal(c[0], s), np.random.normal(c[1], s)])\n # Continue drawing points from the distribution in the range [-1,1]\n if abs(a) < 1 and abs(b) < 1:\n x.append([a,b])\n X.extend(x)\n X = np.array(X)[:N]\n return X\n##### Simulation END ##### \n\n# Identify points for each center.\ndef cluster_points(X, mu):\n clusters = {}\n for x in X:\n bestmukey = min([(i[0], np.linalg.norm(x-mu[i[0]])) \\\n for i in enumerate(mu)], key=lambda t:t[1])[0]\n try:\n clusters[bestmukey].append(x)\n except KeyError:\n clusters[bestmukey] = [x]\n return clusters\n\n# Get closest possible point for each cluster.\ndef closest_point(cluster,possiblePoints):\n closestPoints=[]\n # Check average distance for each point.\n for possible in possiblePoints:\n distances=[]\n for point in cluster:\n distances.append(np.linalg.norm(possible-point))\n closestPoints.append(np.sum(distances)) # minimize total distance\n # closestPoints.append(np.mean(distances))\n return possiblePoints[closestPoints.index(min(closestPoints))]\n\n# Calculate new centers.\n# Here the \'coast constraint\' goes.\ndef reevaluate_centers(clusters,possiblePoints):\n newmu = []\n keys = sorted(clusters.keys())\n for k in keys:\n newmu.append(closest_point(clusters[k],possiblePoints))\n return newmu\n\n# Check whether centers converged.\ndef has_converged(mu, oldmu):\n return (set([tuple(a) for a in mu]) == set([tuple(a) for a in oldmu]))\n\n# Meta function that runs the steps of the process in sequence.\ndef find_centers(X, K, possiblePoints):\n # Initialize to K random centers\n oldmu = random.sample(list(possiblePoints), K)\n mu = random.sample(list(possiblePoints), K)\n while not has_converged(mu, oldmu):\n oldmu = mu\n # Assign all points in X to clusters\n clusters = cluster_points(X, mu)\n # Re-evaluate centers\n mu = reevaluate_centers(clusters,possiblePoints)\n return(mu, clusters)\n\n\nK=3\nX = init_board_gauss(30,K)\npossiblePoints=possible_points()\nresults=find_centers(X,K,possiblePoints)\n\n# Show results\n\n# Show constraints and clusters\n# List point types\npointtypes1=["gx","gD","g*"]\n\nplt.plot(\n np.matrix(possiblePoints).transpose()[0],np.matrix(possiblePoints).transpose()[1],\'m.\'\n )\n\nfor i in list(range(0,len(results[0]))) :\n plt.plot(\n np.matrix(results[0][i]).transpose()[0], np.matrix(results[0][i]).transpose()[1],pointtypes1[i]\n )\n\npointtypes=["bx","yD","c*"]\n# Show all cluster points\nfor i in list(range(0,len(results[1]))) :\n plt.plot(\n np.matrix(results[1][i]).transpose()[0],np.matrix(results[1][i]).transpose()[1],pointtypes[i]\n )\nplt.show()\n编辑以最小化总距离。

\n