How to extract multiple rotated shapes from an image?

Ξέν*_*νος 4 python opencv image-processing computer-vision

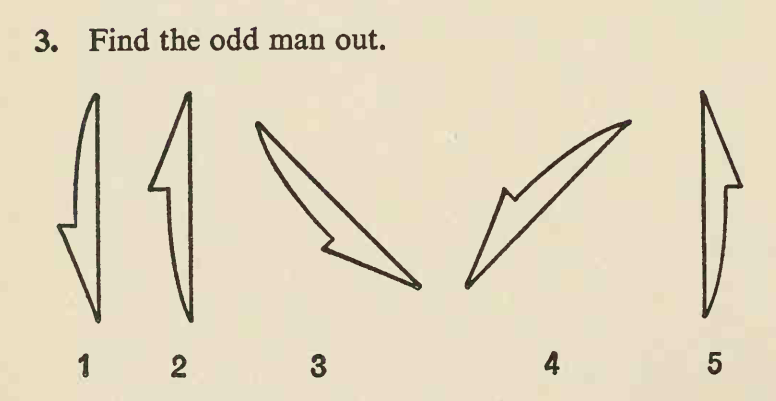

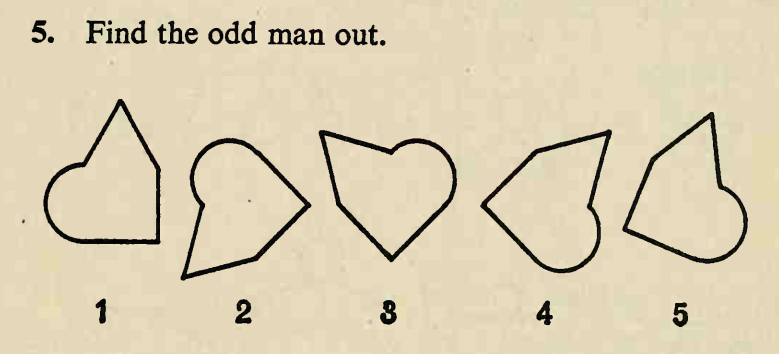

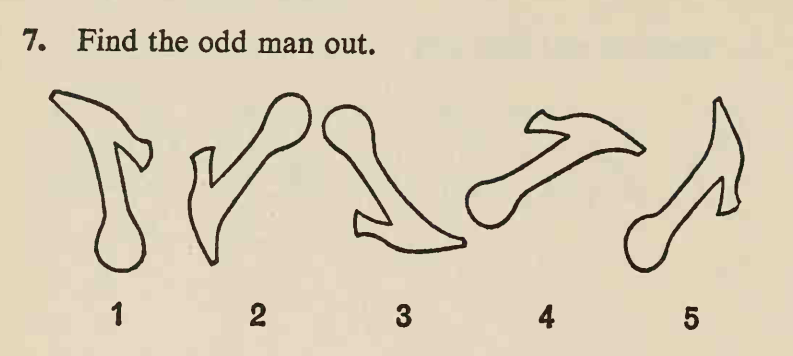

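I took an online visual IQ test in which many of the questions look like the ones above.

The image URLs are:

    [f"https://www.idrlabs.com/static/i/eysenck-iq/en/{i}.png" for i in range(1, 51)]

Each image contains several shapes that are almost identical and almost the same size. Most of the shapes can be obtained from the others by rotation and translation, but exactly one shape can only be obtained from the others by reflection. That shape has a different chirality from the rest and is the "odd one out". The task is to find it.

The answers here are 2, 1 and 4, respectively. I want to automate this.

And I nearly succeeded.

First, I download the image and load it with cv2.

Then I threshold the image and invert the values, find the contours, and keep the largest ones.

Now I need to extract the shapes associated with those contours and make each shape stand upright. This is where I am stuck: it almost works, but there are edge cases.

My idea is simple: find the minimum-area bounding box of the contour, rotate the image so that the rectangle is upright (all sides parallel to the grid lines, longest side vertical), compute the rectangle's new coordinates, and finally extract the shape with array slicing.

I have implemented what I described: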

    import cv2
    import requests
    import numpy as np

    img = cv2.imdecode(
        np.asarray(
            bytearray(
                requests.get(
                    "https://www.idrlabs.com/static/i/eysenck-iq/en/5.png"
                ).content,
            ),
            dtype=np.uint8,
        ),
        -1,
    )


    def get_contours(image):
        gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY)
        _, thresh = cv2.threshold(gray, 128, 255, 0)
        thresh = ~thresh
        contours, _ = cv2.findContours(thresh, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)
        return contours


    def find_largest_areas(contours):
        areas = [cv2.contourArea(contour) for contour in contours]
        area_ranks = [(area, i) for i, area in enumerate(areas)]
        area_ranks.sort(key=lambda x: -x[0])
        for i in range(1, len(area_ranks)):
            avg = sum(e[0] for e in area_ranks[:i]) / i
            if area_ranks[i][0] < avg * 0.95:
                break

        return {e[1] for e in area_ranks[:i]}


    def find_largest_shapes(image):
        contours = get_contours(image)
        area_ranks = find_largest_areas(contours)
        contours = [e for i, e in enumerate(contours) if i in area_ranks]
        rectangles = [cv2.minAreaRect(contour) for contour in contours]
        rectangles.sort(key=lambda x: x[0])
        return rectangles


    def rotate_image(image, angle):
        size_reverse = np.array(image.shape[1::-1])
        M = cv2.getRotationMatrix2D(tuple(size_reverse / 2.0), angle, 1.0)
        MM = np.absolute(M[:, :2])
        size_new = MM @ size_reverse
        M[:, -1] += (size_new - size_reverse) / 2.0
        return cv2.warpAffine(image, M, tuple(size_new.astype(int)))


    def int_sort(arr):
        return np.sort(np.intp(np.floor(arr + 0.5)))


    RADIANS = {}


    def rotate(x, y, angle):
        if pair := RADIANS.get(angle):
            cosa, sina = pair
        else:
            a = angle / 180 * np.pi
            cosa, sina = np.cos(a), np.sin(a)
            RADIANS[angle] = (cosa, sina)

        return x * cosa - y * sina, y * cosa + x * sina


    def new_border(x, y, angle):
        nx, ny = rotate(x, y, angle)
        nx = int_sort(nx)
        ny = int_sort(ny)
        return nx[3] - nx[0], ny[3] - ny[0]


    def coords_to_pixels(x, y, w, h):
        cx, cy = w / 2, h / 2
        nx, ny = x + cx, cy - y
        nx, ny = int_sort(nx), int_sort(ny)
        a, b = nx[0], ny[0]
        return a, b, nx[3] - a, ny[3] - b


    def new_contour_bounds(pixels, w, h, angle):
        cx, cy = w / 2, h / 2
        x = np.array([-cx, cx, cx, -cx])
        y = np.array([cy, cy, -cy, -cy])
        nw, nh = new_border(x, y, angle)
        bx, by = pixels[..., 0] - cx, cy - pixels[..., 1]
        nx, ny = rotate(bx, by, angle)
        return coords_to_pixels(nx, ny, nw, nh)


    def extract_shape(rectangle, image):
        box = np.intp(np.floor(cv2.boxPoints(rectangle) + 0.5))
        h, w = image.shape[:2]
        angle = -rectangle[2]
        x, y, dx, dy = new_contour_bounds(box, w, h, angle)
        image = rotate_image(image, angle)
        shape = image[y : y + dy, x : x + dx]
        sh, sw = shape.shape[:2]
        if sh < sw:
            shape = np.rot90(shape)

        return shape


    rectangles = find_largest_shapes(img)
    for rectangle in rectangles:
        shape = extract_shape(rectangle, img)
        cv2.imshow("", shape)
        cv2.waitKeyEx(0)

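But it isn't perfect.

As you can see, the result includes everything inside the bounding rectangle, not just the main shape bounded by the contour, so some extra bits of neighboring shapes stick in. I want the extracted shape to contain only the area bounded by the contour.

The more serious problem is that the bounding box does not always align with the principal axis of the contour. As you can see in the last image, the shape is not upright and there are black areas.

How can I fix these problems?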

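Here's my take on the problem. It basically involves working with the contours themselves instead of the actual raster image. Use Hu moments as shape descriptors; you can compute the moments directly on the array of contour points. Take one "objective/reference" contour and compare its Hu moments array against that of each "target" contour, looking for the maximum (Euclidean) distance between the two arrays. The contour that produces the largest distance is the mismatched contour.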

Keep in mind that the contours are stored unordered, which means that the objective contour (contour number 0, the reference) might itself be the mismatched shape. In that case every distance between this contour and the rest is already a "maximum distance", and we need to handle this case. Hint: check the distribution of the distances. If there is a real maximum distance, it will be larger than the rest and can be identified as an outlier.

Let's check the code. The first step is what you already have: look for the target contours by applying an area filter. Let's keep the largest contours:

    import numpy as np
    import cv2
    import math

    # Set image path
    directoryPath = "D://opencvImages//shapes//"

    imageNames = ["01", "02", "03", "04", "05"]

    # Loop through the image file names:
    for imageName in imageNames:

        # Set image path:
        imagePath = directoryPath + imageName + ".png"

        # Load image:
        inputImage = cv2.imread(imagePath)

        # To grayscale:
        grayImage = cv2.cvtColor(inputImage, cv2.COLOR_BGR2GRAY)

        # Otsu:
        binaryImage = cv2.threshold(grayImage, 0, 255, cv2.THRESH_OTSU + cv2.THRESH_BINARY_INV)[1]

        # Contour list:
        # Store here all contours of interest (large area):
        contourList = []

        # Find the contour on the binary image:
        contours, hierarchy = cv2.findContours(binaryImage, cv2.RETR_EXTERNAL, cv2.CHAIN_APPROX_SIMPLE)

        for i, c in enumerate(contours):

            # Get blob area:
            currentArea = cv2.contourArea(c)

            # Set min area:
            minArea = 1000

            if currentArea > minArea:
                # Approximate the contour to a polygon:
                contoursPoly = cv2.approxPolyDP(c, 3, True)

                # Get the polygon's bounding rectangle:
                boundRect = cv2.boundingRect(contoursPoly)

                # Get the bounding rectangle's center (used as an approximate centroid):
                cx = int(int(boundRect[0]) + 0.5 * int(boundRect[2]))
                cy = int(int(boundRect[1]) + 0.5 * int(boundRect[3]))

                # Store in dict:
                contourDict = {"Contour": c, "Rectangle": tuple(boundRect), "Centroid": (cx, cy)}

                # Into the list:
                contourList.append(contourDict)

So far, I've filtered all the contours above a minimum area. I've stored the following information in a dictionary: the contour itself (the array of points), its bounding rectangle and its centroid. The last two will come in handy when checking the results.

Now, let's compute the Hu moments and their distances. I'll set the first contour (at index 0) as the objective/reference and the rest of the contours as targets. The main takeaway is that we are looking for the maximum distance between the reference's Hu moments array and each target's array; that is what identifies the mismatched shape. One note, though: the scale of the moment features varies wildly. Whenever you compare things for similarity, it is advisable to scale all the features to the same range. In this particular case, I'll apply a log transform to all the features to scale them down:

        # Get total contours in the list:
        totalContours = len(contourList)

        # Deep copies of input image for results:
        inputCopy = inputImage.copy()
        contourCopy = inputImage.copy()

        # Set contour 0 as objective:
        currentDict = contourList[0]
        # Get objective contour:
        objectiveContour = currentDict["Contour"]

        # Draw objective contour in green:
        cv2.drawContours(contourCopy, [objectiveContour], 0, (0, 255, 0), 3)

        # Draw contour index on image:
        center = currentDict["Centroid"]
        cv2.putText(contourCopy, "0", center, cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 0, 0), 2)

        # Store contour distances here:
        contourDistances = []

        # Calculate log-scaled hu moments of objective contour:
        huMomentsObjective = getScaledMoments(objectiveContour)

        # Start from objectiveContour+1, get target contour, compute scaled moments and
        # get Euclidean distance between the two scaled arrays:

        for i in range(1, totalContours):
            # Set target contour:
            currentDict = contourList[i]
            # Get contour:
            targetContour = currentDict["Contour"]

            # Draw target contour in red:
            cv2.drawContours(contourCopy, [targetContour], 0, (0, 0, 255), 3)

            # Calculate log-scaled hu moments of target contour:
            huMomentsTarget = getScaledMoments(targetContour)

            # Compute Euclidean distance between the two arrays:
            contourDistance = np.linalg.norm(np.transpose(huMomentsObjective) - np.transpose(huMomentsTarget))
            print("contourDistance:", contourDistance)

            # Store distance along contour index in distance list:
            contourDistances.append([contourDistance, i])

            # Draw contour index on image:
            center = currentDict["Centroid"]
            cv2.putText(contourCopy, str(i), center, cv2.FONT_HERSHEY_SIMPLEX, 1, (255, 0, 0), 2)

            # Show processed contours:
            cv2.imshow("Contours", contourCopy)
            cv2.waitKey(0)

That's a big snippet. A few things: I've defined the `getScaledMoments` function, which computes the scaled array of Hu moments; I show this function at the end of the post. From here on, I store the distance between the objective contour and each target in the `contourDistances` list, along with the index of the target contour. This will help me identify the mismatched contour.
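The log scaling matters because raw Hu moments typically span many orders of magnitude, so without it the largest one or two would dominate the Euclidean distance. Below is a vectorized NumPy sketch of the same signed log transform that `getScaledMoments` applies. The `log_scale_hu` name, the `eps` guard against zeros, and the sample values are assumptions made for this illustration:

```python
import numpy as np


def log_scale_hu(hu_moments, eps=1e-30):
    """Signed log10 scaling: -sign(h) * log10(|h|), element-wise."""
    hu = np.asarray(hu_moments, dtype=np.float64).ravel()
    magnitude = np.maximum(np.abs(hu), eps)  # avoid log10(0)
    return -np.sign(hu) * np.log10(magnitude)


# Made-up values with the kind of spread Hu moments tend to show.
raw = np.array([2.0e-1, 1.0e-3, 4.0e-7, -1.0e-7, 0.0])
scaled = log_scale_hu(raw)
print(scaled)
```

Because the sign is kept, a moment that flips sign between two contours ends up far apart after scaling. One design choice to be aware of: an exactly zero moment maps to 0 in this sketch, whereas a plain `math.log10(0)` would raise an error.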

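I'm also using the centroids to label each contour. In the visualization of the distance calculation, the reference contour is drawn in green, while each target is drawn in red.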

Next, let's get the maximum distance. We have to handle the case where the reference contour was the mismatched shape all along. For this, I'll apply a very crude outlier detector. The idea is that one distance in the `contourDistances` list must be larger than the rest, while the rest are more or less the same. The keyword here is variation. I'll use the standard deviation of the distances and look for the maximum distance only if that standard deviation is above a minimum threshold (`minSigma`, set to 1.0 here):

        # Get maximum distance,
        # List to numpy array:
        distanceArray = np.array(contourDistances)

        # Get distance mean and std dev:
        mean = np.mean(distanceArray[:, 0:1])
        stdDev = np.std(distanceArray[:, 0:1])

        print("M:", mean, "Std:", stdDev)

        # Set contour 0 (default) as the contour that is different from the rest:
        contourIndex = 0
        # Sigma minimum threshold:
        minSigma = 1.0

        # If std dev from the distance array is above a minimum variation,
        # there's an outlier (max distance) in the array, thus, the real different
        # contour we are looking for:

        if stdDev > minSigma:
            # Get max distance:
            maxDistance = np.max(distanceArray[:, 0:1])
            # Set contour index (contour at index 0 was the objective!):
            contourIndex = np.argmax(distanceArray[:, 0:1]) + 1
            print("Max:", maxDistance, "Index:", contourIndex)

        # Fetch dissimilar contour, if found,
        # Get boundingRect:
        boundingRect = contourList[contourIndex]["Rectangle"]

        # Get the dimensions of the bounding rect:
        rectX = boundingRect[0]
        rectY = boundingRect[1]
        rectWidth = boundingRect[2]
        rectHeight = boundingRect[3]

        # Draw dissimilar (mismatched) contour in red:
        color = (0, 0, 255)
        cv2.rectangle(inputCopy, (int(rectX), int(rectY)),
                      (int(rectX + rectWidth), int(rectY + rectHeight)), color, 2)
        cv2.imshow("Mismatch", inputCopy)
        cv2.waitKey(0)

Finally, I just use the bounding rectangle stored at the beginning of the script to mark the mismatched shape.

This is the `getScaledMoments` function:

    def getScaledMoments(inputContour):
        """Computes log-scaled hu moments of a contour array"""
        # Calculate Moments
        moments = cv2.moments(inputContour)
        # Calculate Hu Moments (flattened to 1-D so that each entry is a scalar)
        huMoments = cv2.HuMoments(moments).flatten()

        # Log scale hu moments
        for m in range(0, 7):
            huMoments[m] = -1 * math.copysign(1.0, huMoments[m]) * math.log10(abs(huMoments[m]))

        return huMoments

Here are some results:

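The following result is artificial. I manually modified the image so that the reference contour (at index = 0) is the mismatched contour, so there is no need to look for the maximum distance (we already have the result).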
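One way to avoid special-casing the reference contour is to compare every contour with every other one and pick the contour whose total distance to the rest is largest. This is an alternative sketch, not part of the code above. The `find_odd_one_out` name and the feature vectors are made up for illustration; in practice each row would be the log-scaled Hu moments of one contour:

```python
import numpy as np


def find_odd_one_out(features):
    """Index of the row that is farthest, in total, from all other rows."""
    feats = np.asarray(features, dtype=np.float64)
    # Pairwise Euclidean distances via broadcasting: shape (n, n).
    diffs = feats[:, None, :] - feats[None, :, :]
    dists = np.linalg.norm(diffs, axis=2)
    return int(np.argmax(dists.sum(axis=1)))


# Made-up feature vectors: three near-identical rows and one outlier.
similar = [[0.7, 3.1, 5.0], [0.7, 3.0, 5.1], [0.7, 3.1, 5.1]]
outlier = [0.7, 3.1, -5.0]

print(find_odd_one_out(similar + [outlier]))  # outlier stored last
print(find_odd_one_out([outlier] + similar))  # outlier stored first
```

This needs at least three contours to be meaningful and costs O(n²) distance computations, which is negligible for the handful of shapes in each test image. It does not depend on a hand-tuned standard deviation threshold, but it will still return some index even when no shape is really different.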
